You can create export jobs to export data from Datameer X to other connections such as a database, remote file server, or export results to a third-party BI (business intelligence) software package. Exported data is the result from executing analyses in a workbook on the original data set. You can initiate a one-time manual export, or you can configure the job to run each time the workbook is updated or at a specific time interval.

It is also possible to download data from Datameer X without using an export job.

Before you can export data, you must create a workbook. Once the workbook has executed, you can export data from one of the saved sheets in that workbook.

Exporting data is only available in Datameer's Enterprise product.

Select to export a worksheet to one of the following file formats:

Only the data from a saved workbook sheet is available for export.

To export data:

Select which connection the data should be exported to, either an existing connection or create a new one. Select to export the data as a CSV file, an AVRO file, a Parquet file, or Tableau (TDSX) file. Then click Next.

There are additional settings if the file is being exported as a CSV. Select whether column headers should be contained in the first row, and how delimiter, quote, and escape characters should be defined.

If you are exporting a float value to a .CSV, an additional trailing zero (.0) is added following the original value. Leading zeros (0.) are removed if the integer part of the float value is larger than zero.

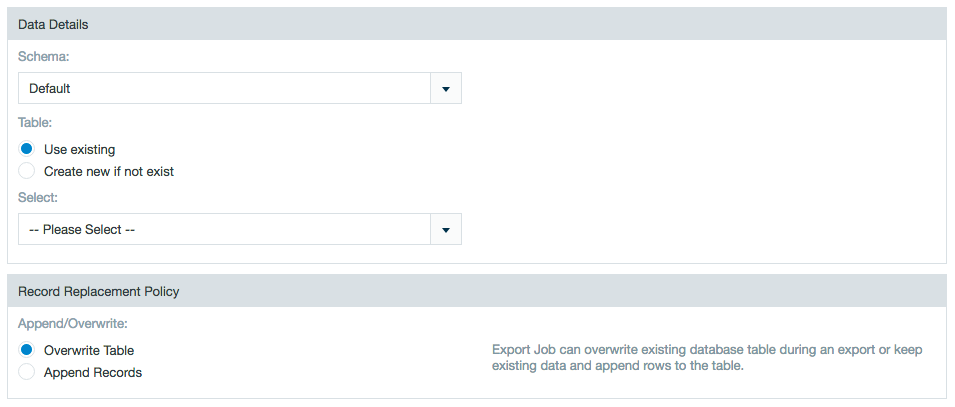

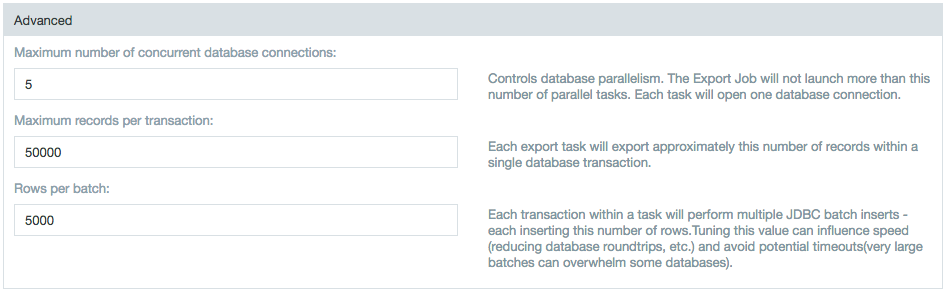

If you export to a database such as MySQL, you must select a table and select either Use Existing Table to overwrite the database table or Create new if it doesn't exist to add the table. If you use an existing table with a large amount of previous data, the overall export might be slow as it first needs to delete the old data. However, if you create a new table, delete statements aren't used and performance might be improved. There is a hard limit of 1,000 entries.

An additional requirement for exporting with SFTP is that the user creating the export job must have permissions to create a folder (mkdir) on the SFTP server. This permission is required even when the folder on the SFTP server already exists.

When exporting to a database, the database connector is associated with a user name. This database user must have write and/or create permissions on the database itself in order to complete the export job.

When exporting data to DB2 or to an Oracle connection, there are limitations on the number of characters contained in string data fields.

Attempting to export data fields containing a larger number of characters results in dropped records.

If the Use existing option is selected Datameer X doesn't create a primary key column with name "ID". (i.e., The workbook must be aware of the ID column. All columns from the destination table (can't be null) must be mapped to a column of the workbook.)

Append Mode

Overwrite Mode

Tips:

|

If the Create new if not exist option is selected Datameer X creates an additional column with name "ID" (primary key). When the database supports auto increment, the database computes the value of this column.

Example: Netezza doesn't support auto increment. In this case Datameer X uses a sequence database object to generate the ID. Specific for Netezza: the name of the sequence used is <tablename>_seq.

Append Mode

Overwrite Mode

Tip:

|

|

Netezza export jobs have the option of storing table and column identifiers as case-insensitive (regular identifiers) or case-sensitive (delimited identifiers). Prior to Datameer X v6.2, all identifiers were stored as case-sensitive. As of Datameer X v6.2, the default setting for identifiers in Netezza export jobs is set as case-insensitive. Previous Netezza export jobs case sensitivity settings can be change within the export job wizard. The schema for the database is always case-sensitive. Changing this setting might create a new table in Netezza.

|

Select which fields to include. Clear any fields that should be excluded. Select whether empty fields can be accepted.

Tips:

|

By default, the column mapping of the export job is based on the columns of the current worksheet being exported. When editing and/or overwriting an export job, it is possible for the column mapping to be based on the the previous export job mapping instead of the current worksheet column mapping. This can be configured in the default.properties file.

# Enables the workbook columns to synchronize with export wizard mapping. # If enabled, the export wizard displays the workbook columns on the mapping step. # Otherwise, the saved mapping from the database is used on the export wizard's mapping step. export.file.inherit-workbook-column-names.enabled=true export.db.inherit-workbook-column-names.enabled=true export.other.inherit-workbook-column-names.enabled=true |

Select how to handle invalid records, and click Next.

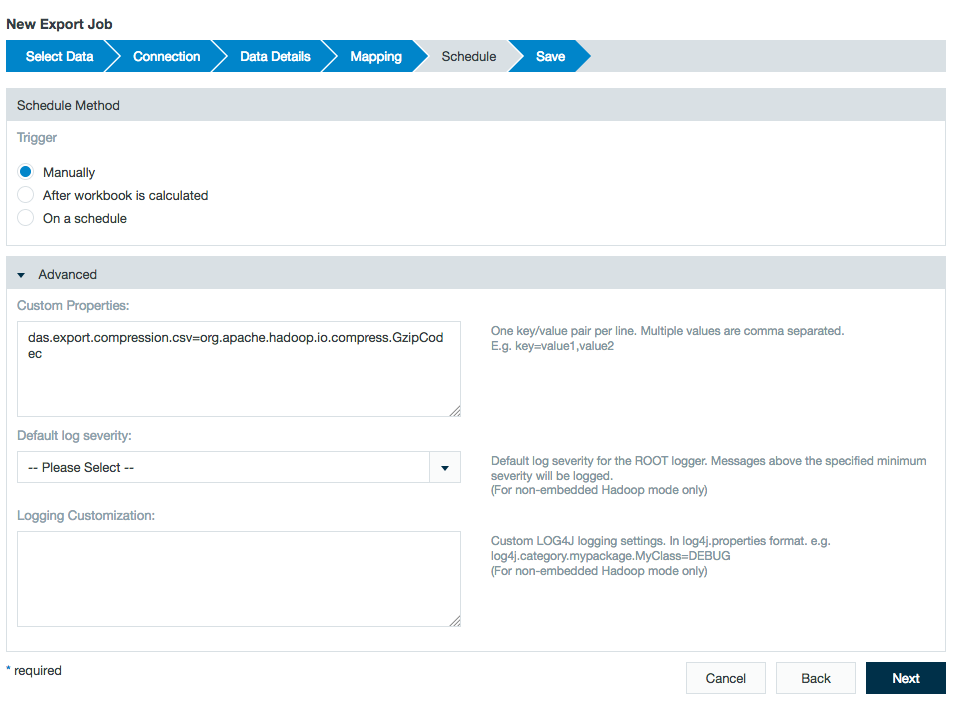

To export a file as a compressed CSV:

Under the Schedule section, open the advanced settings and enter the following property in the Custom Properties field:

das.export.compression.csv=org.apache.hadoop.io.compress.<codec>Codec |

More information on <codec> values for Gzip, Default (deflate), Snappy, etc. are found on Using Compression with Hadoop and Datameer.

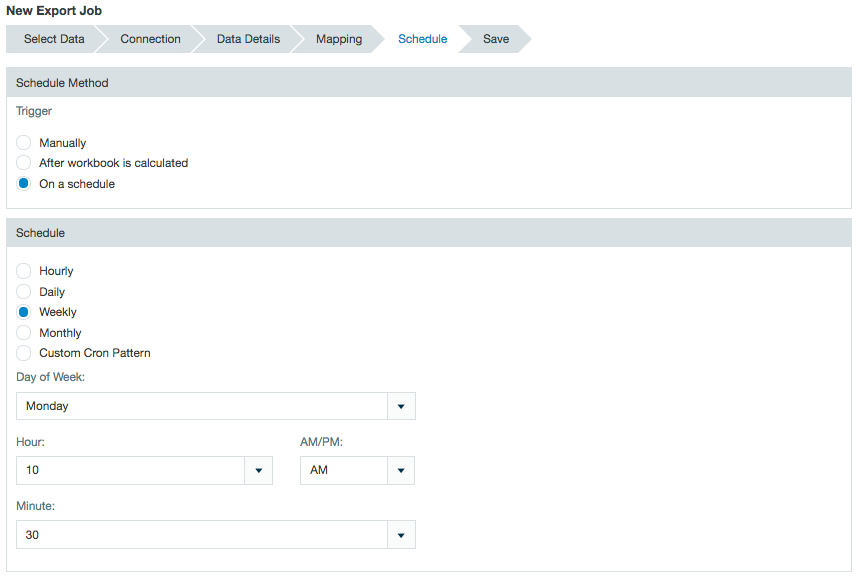

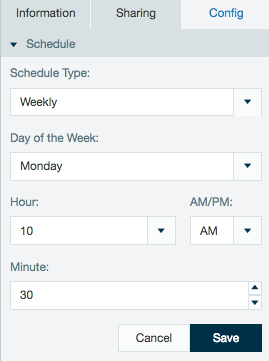

You have a great deal of flexibility in choosing when jobs are run. You can choose to run them manually, after a workbook runs, or at a interval you specify.

The schedule of an export can also be viewed or edited from the inspector in the file browser.

Schedules created with non complex cron patterns are converted automatically in the inspector. Select Schedule Type and Custom from the drop down menu to view or edit the schedule cron pattern.

Only the data from a saved workbook sheet is available for export.

To edit export job settings:

To create a copy of an existing export job:

A duplicate is created and is named "copy of ..." and the name of the original export job.

To run an export job:

Depending on the amount of data, this process may take awhile.

The default configuration has Hadoop distributing the export job to increase performance. The number of split files depends on the amount of data in the export. Each of the split files is exported with an attached sequence number starting with _00000.

It is possible to configure the export to go to a single file but isn't recommended unless necessary as it may cause performance slowdowns with larger files.

Export jobs run as a single task if the source sheet is sorted. The sorted sheet can be generated in parallel in the workbook, but the export job forces the job to run as a single task. To get around this, create a filter on the primary export sheet restricting it to a specific range and select the option to create this filter in a new sheet. Do this multiple times until all of the data is filtered out into separate sheets. Once done, run export jobs against these newly created sheets. |

Only the export job is deleted, not the original data.

To delete an export job:

To edit an export job's permissions: