When you import data, you import it into a connection, which is a collection of data from various types of files and databases. You can then create a workbook that uses data from multiple sources (for example, a user list from a database and an Apache error log file). See Supported External Data Types and Sources for information about the types of databases and files that you can import into Datameer.

Once the connection is set up, you create import jobs to import the data you want to use. You can also edit, rename, create a copy, run, view the full data, view the details and information, or delete an existing import job.

See Define File Path Range to learn how to use a date range to limit which files get imported.

Obfuscation is available with Datameer's Advanced Governance module.

| Custom Property | Description | Default Value |
|---|---|---|
| das.conductor.default.record.sample.size | | 1,000 |

To create an import job, right-click in the Browser and select "Create New → Import Job".

Click on "Select Connection", select the connection and confirm with "Select".

Select the file type and click "Next".

The fields on the 'Data Details' tab depend on the file type; however, several fields are common to all types. In the 'Encryption' section, enter all columns to be obfuscated, with a space between the names.

Note that when a column is obfuscated, that data is never pulled into Datameer.

See the following sections for additional details about importing each of the file types:

XML data: specify the file or folder, the root element, container element, and XPath expressions for the fields you would

For all types, you can:


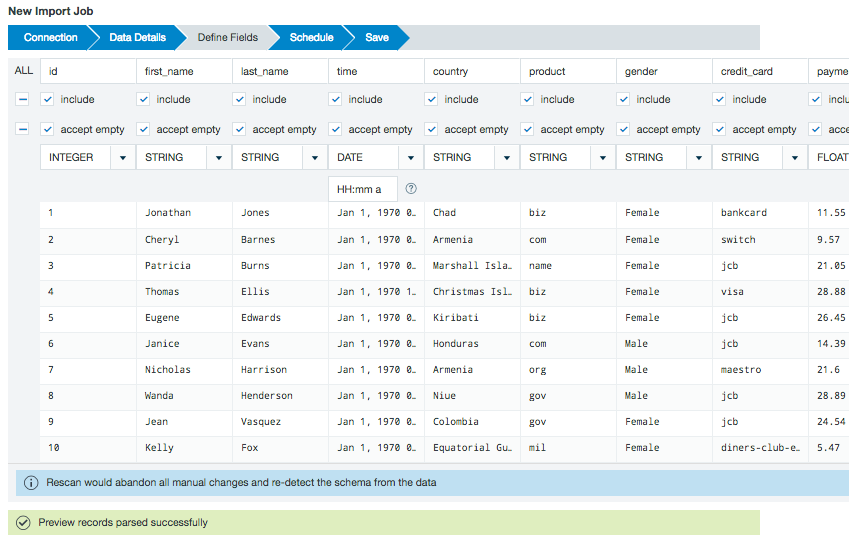

View a sample of the data set to confirm this is the data source you want to use. Use the checkboxes to select which fields to import into Datameer. The accept empty checkbox lets you specify whether NULL and empty values are kept or dropped upon import. Verify or select a data type for each column from the drop-down menu. You can specify the format for date type fields. Click the question mark icon in the data format field to see a complete list of supported date pattern formats.

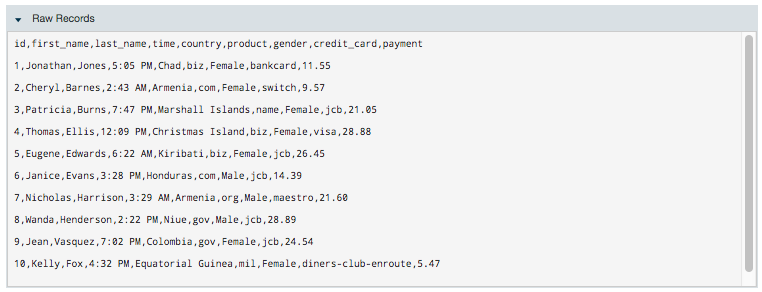

The Raw Records section shows how your data is viewed by Datameer X before the import.

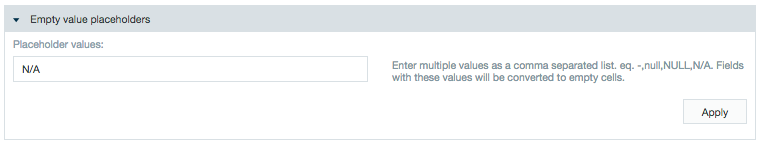

The Empty value placeholders section lets you assign specific values as /wiki/spaces/DASSB70/pages/33036123891. Values added here aren't imported into Datameer.

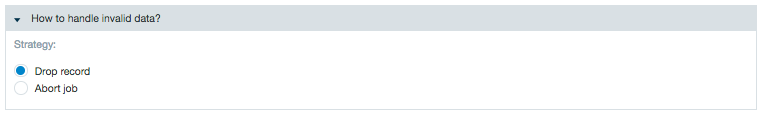

The How to handle invalid data? section lets you decide how to proceed if part of a /wiki/spaces/DASSB70/pages/33036123891 doesn't fit with the defined schema during import.

Selecting the option to drop the record removes the entire record from the import job. The option to abort the job stops the import job when an invalid record is detected.
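The difference between the two options can be sketched in Python. This is an illustration only, not Datameer's implementation: the function `import_records`, the `schema` mapping, the policy names `"drop"` and `"abort"`, and the sample rows are all hypothetical.

```python
from typing import Callable, Dict, List


class ImportAborted(Exception):
    """Raised when the hypothetical 'abort' policy meets an invalid record."""


def import_records(rows: List[Dict[str, str]],
                   schema: Dict[str, Callable[[str], object]],
                   on_invalid: str = "drop"):
    """Convert each row using the schema and apply the chosen policy to invalid rows."""
    imported, dropped = [], []
    for row in rows:
        try:
            # Every field must convert cleanly; otherwise the whole record is invalid.
            imported.append({name: cast(row[name]) for name, cast in schema.items()})
        except (KeyError, ValueError) as err:
            if on_invalid == "abort":
                # Abort: stop the whole import at the first invalid record.
                raise ImportAborted(f"invalid record {row!r}: {err}") from err
            # Drop: leave out the entire record and continue.
            dropped.append(row)
    return imported, dropped


# Hypothetical sample data: the second row has a non-numeric age.
rows = [{"user": "ann", "age": "31"}, {"user": "bob", "age": "n/a"}]
schema = {"user": str, "age": int}
imported, dropped = import_records(rows, schema, on_invalid="drop")
```

With the drop policy, the job finishes and the second row ends up in `dropped`. With `on_invalid="abort"`, the same input raises `ImportAborted` at that row.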

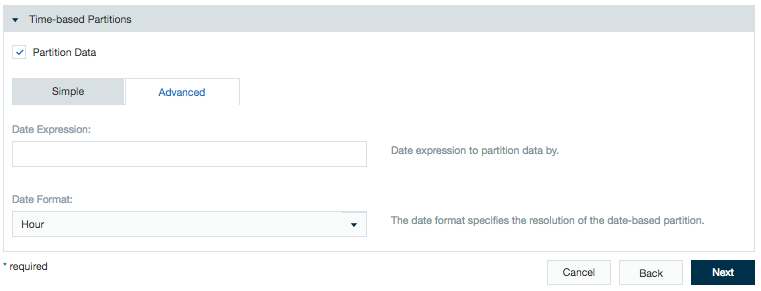

You can partition your data using date parameters. When this data is loaded into a workbook, you can choose to run your calculations on all or on just a part of your data. Also, if you decide to export data, you can choose to export all or just a part of your data.
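To make the idea of date partitioning concrete, here is a minimal Python sketch. It assumes monthly partitions and a record field named `date`; both choices, as well as the helper names `partition_by_month` and `select_partitions`, are hypothetical and do not reflect how Datameer stores partitions internally.

```python
from collections import defaultdict
from datetime import date


def partition_by_month(records):
    """Group records into partitions keyed by the (year, month) of their date field."""
    partitions = defaultdict(list)
    for record in records:
        day = record["date"]
        partitions[(day.year, day.month)].append(record)
    return dict(partitions)


def select_partitions(partitions, start, end):
    """Return only the records whose partition key lies in the range [start, end]."""
    return [record
            for key in sorted(partitions)
            if start <= key <= end
            for record in partitions[key]]


# Hypothetical sample data spanning three months.
records = [
    {"date": date(2023, 1, 15), "value": 10},
    {"date": date(2023, 2, 3), "value": 20},
    {"date": date(2023, 2, 20), "value": 30},
    {"date": date(2023, 3, 1), "value": 40},
]
partitions = partition_by_month(records)
february_only = select_partitions(partitions, (2023, 2), (2023, 2))
```

Because the records are grouped up front, a calculation or export that needs only February reads one partition instead of scanning every record.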

Click Next.

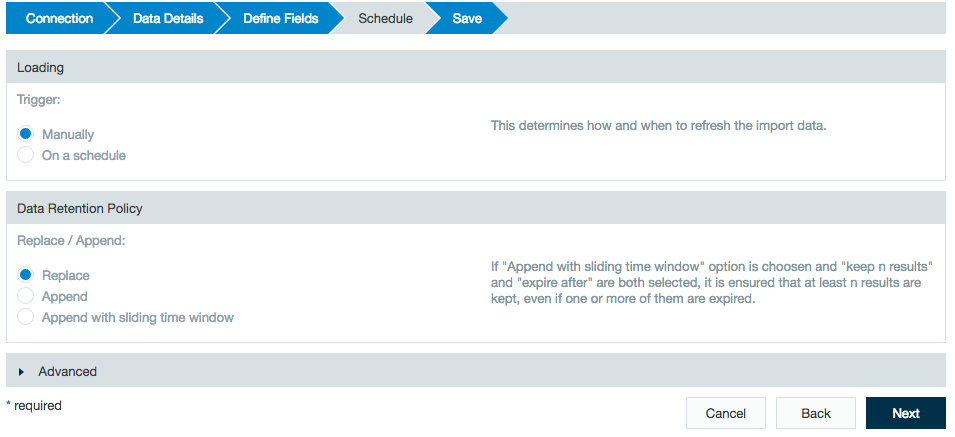

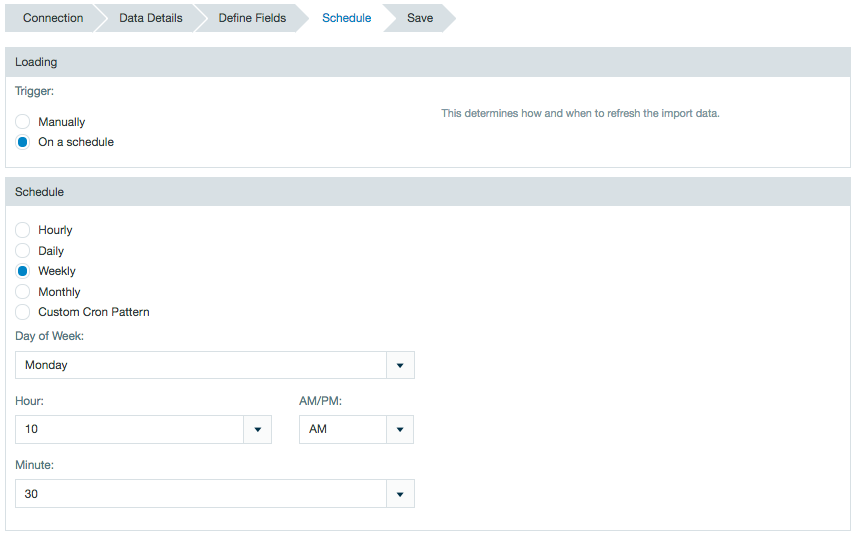

Define the schedule details.

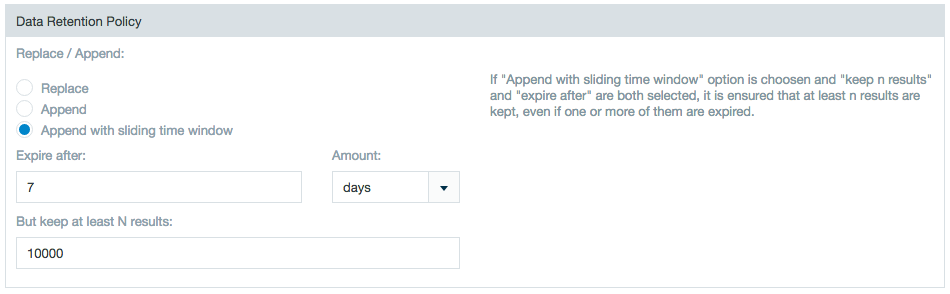

In the Loading section, select Manually to update the data only when you rerun the import job, or On a schedule to update the import job at a specified time. In the Data Retention Policy section, choose whether new data replaces the existing data or is appended to it when the import job is updated. You can also choose Append with sliding time window to define the range after which results expire and how many results to keep.
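The three retention choices can be compared with a small Python sketch. It is a conceptual model under stated assumptions, not Datameer's behavior: the function `apply_retention`, the policy names, the `loaded_at` field, and the way the age and count limits are combined are all hypothetical.

```python
from datetime import datetime, timedelta


def apply_retention(existing, new_run, policy, now=None, max_age=None, max_results=None):
    """Return the runs that are kept after new_run is added under the given policy."""
    if policy == "replace":
        # New data replaces everything imported before.
        return [new_run]
    runs = existing + [new_run]
    if policy == "append":
        # New data is added and nothing expires.
        return runs
    if policy == "sliding_window":
        if max_age is not None:
            # Expire runs that are older than the configured range.
            runs = [run for run in runs if now - run["loaded_at"] <= max_age]
        if max_results is not None:
            # Keep only the most recent max_results runs.
            runs = runs[max(len(runs) - max_results, 0):]
        return runs
    raise ValueError(f"unknown policy: {policy}")


# Hypothetical run history: two earlier runs plus the run being added now.
now = datetime(2024, 1, 10)
existing = [
    {"id": 1, "loaded_at": datetime(2024, 1, 1)},
    {"id": 2, "loaded_at": datetime(2024, 1, 8)},
]
new_run = {"id": 3, "loaded_at": now}

replaced = apply_retention(existing, new_run, "replace")
appended = apply_retention(existing, new_run, "append")
windowed = apply_retention(existing, new_run, "sliding_window",
                           now=now, max_age=timedelta(days=5))
```

In this example the sliding window drops the run from January 1 because it is older than five days, while plain append keeps all three runs.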

Appended import jobs can contain additional or fewer columns than the previously run import job. When the schema is rescanned, Datameer X notices that columns have changed, so no data is lost. However, data can be lost due to append changes in these circumstances:

- If a column has been renamed, the schema uses the column with the new name and deletes the data from the old column name.
- If the data type of a column has been changed.
- If the partition schema is changed, the data resets starting from the new change.

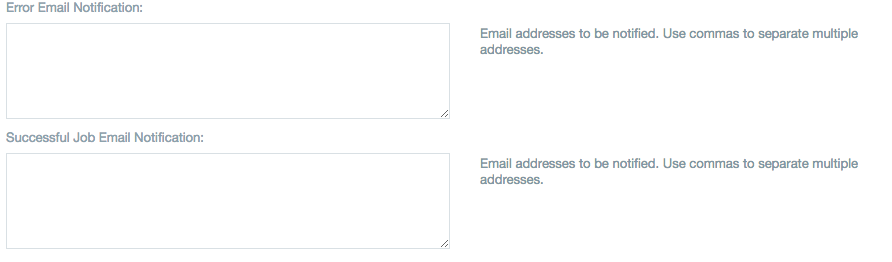

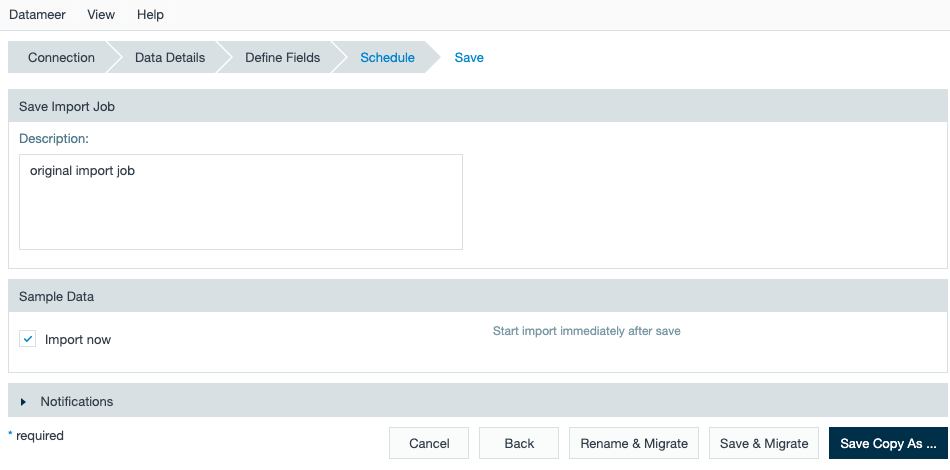

Add a description, name the file, click the checkbox to start the import immediately if desired, and click Save. You can also specify notification emails to be sent for any error messages and when a job has completed successfully. Use a comma to separate multiple email addresses. The maximum character count in these fields is 255.
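If you prepare the notification list outside Datameer, a quick check like the following Python sketch can catch formatting problems before you paste the value into the field. The 255-character limit and the comma separator come from the text above; the function name `parse_notification_emails` and the very rough address check are assumptions made for this example.

```python
MAX_FIELD_LENGTH = 255  # limit stated for the notification fields


def parse_notification_emails(field: str) -> list:
    """Split a comma-separated email field and apply basic sanity checks."""
    if len(field) > MAX_FIELD_LENGTH:
        raise ValueError(f"field has {len(field)} characters, limit is {MAX_FIELD_LENGTH}")
    # Split on commas and ignore surrounding whitespace and empty entries.
    addresses = [part.strip() for part in field.split(",") if part.strip()]
    for address in addresses:
        # Rough check only: something before and after a single "@" separator.
        local, separator, domain = address.partition("@")
        if not (local and separator and domain):
            raise ValueError(f"not an email address: {address!r}")
    return addresses


# Hypothetical addresses for illustration.
recipients = parse_notification_emails("ops@example.com, data-team@example.com")
```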

Note: Sample regex pattern for importing data: (\S+) (\S+) (\S+) (\S+) (\S+) See Importing with Regular Expressions to learn more.
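To see what the sample pattern captures, you can try it outside Datameer. The sketch below uses Python's `re` module, so details of the regex dialect may differ from the one Datameer uses. The sample log line is made up, and requiring the whole line to match is an assumption of this example.

```python
import re

# The sample pattern from the note: five groups of non-whitespace
# characters, separated by single spaces.
PATTERN = re.compile(r"(\S+) (\S+) (\S+) (\S+) (\S+)")


def split_line(line: str):
    """Return the five captured columns, or None if the line doesn't match."""
    match = PATTERN.fullmatch(line)
    return list(match.groups()) if match else None


# Hypothetical log line with exactly five space-separated tokens.
columns = split_line("192.168.0.1 GET /index.html 200 512")
# A line with only four tokens does not match the pattern.
no_match = split_line("192.168.0.1 GET /index.html 200")
```

Each capture group becomes one column, so a line with a different number of tokens does not match and yields no columns in this sketch.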

Click Raw Records to view an expanded sample of the raw data not in tabular format. Click Raw Records again to hide the raw data.

Some of these settings can also be accessed through the Save Workbook settings, which let you specify when jobs run, how errors are handled, who gets notified, what data is saved with the workbook, and how much historical data (if any) is kept. See Configuring Workbook Settings to learn details about each of the settings.

To view and edit the job settings through the Import Data view:

To view and edit the job settings through the workbook view:

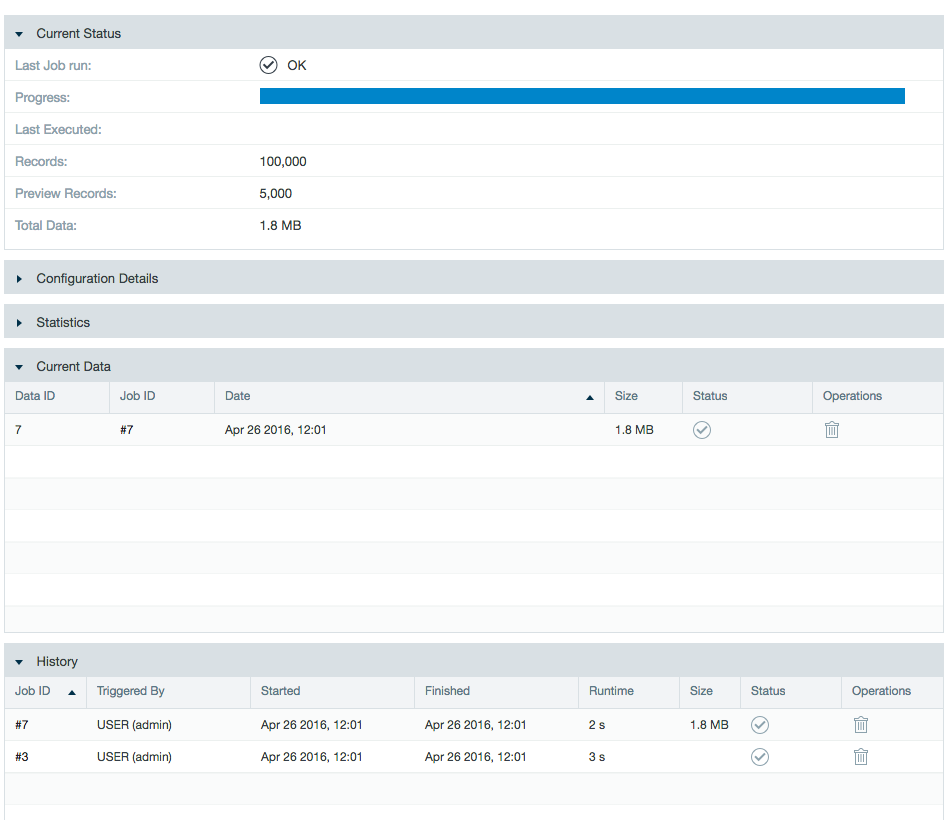

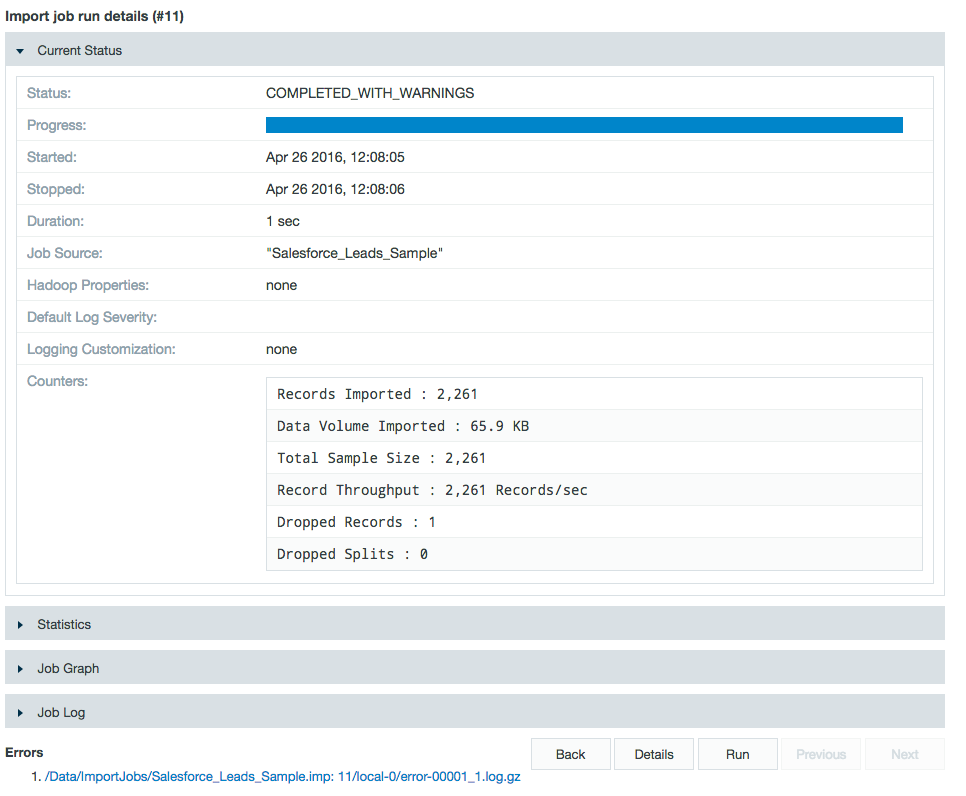

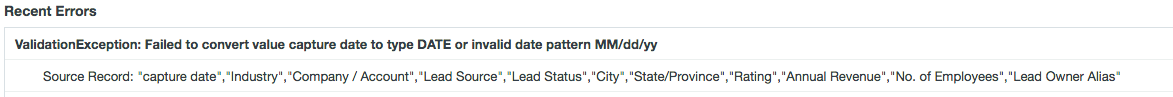

If the import job runs with dropped records, the page listing all import jobs displays a "Completed with warnings" icon for that job. You can easily find out what caused the problem.

To view dropped records:

The job details page showing Job History.

The details page showing the list of errors.

The error log shown when you click an entry in the list of errors.

The job run details page showing statistics and the job log file.

Use the links in the Job log file section to download the log file, download the job trace, or to report an issue to Datameer. When you click Report an issue, fill out the bug report and provide steps to recreate the error.

You have a great deal of flexibility in choosing when jobs are run. You can choose to run them manually or at an interval you specify.

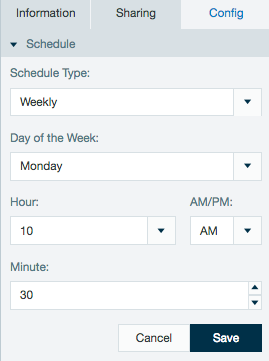

The schedule of an import job can also be viewed or edited from the inspector in the file browser.

Schedules created with non-complex cron patterns are converted automatically in the inspector. Select Schedule Type and Custom from the drop-down menu to view or edit the schedule cron pattern.

To edit an import job:

If just the partitioning has changed, the Save & Migrate button simply performs a migration rather than refetching data from the source.

You can create a copy of an import job. Here are some items to note if you copy an import job:

To create a copy of an existing import job:

The copy is created and named "copy of" plus the name of the original import job.

You can run an import job manually or schedule it to run automatically.

To run an import job manually:

Depending on the amount of data, this might take some time.

Note that deleting an import job deletes the job, not the original data. It also marks the imported data within HDFS for removal by the Housekeeping service. Deleting an import job can't be undone. If a workbook is referencing the imported data, the workbook might not work.

To delete an import job:

You can link data to a new workbook.

To link data to a new workbook:

You can view the count of processed bytes for each upload and their total volume counting towards the license term.

To view the processed bytes for a single job execution and the totals for that import job configuration:

If a new license term starts and the import job is processed again, the count starts with a new total processed data amount.

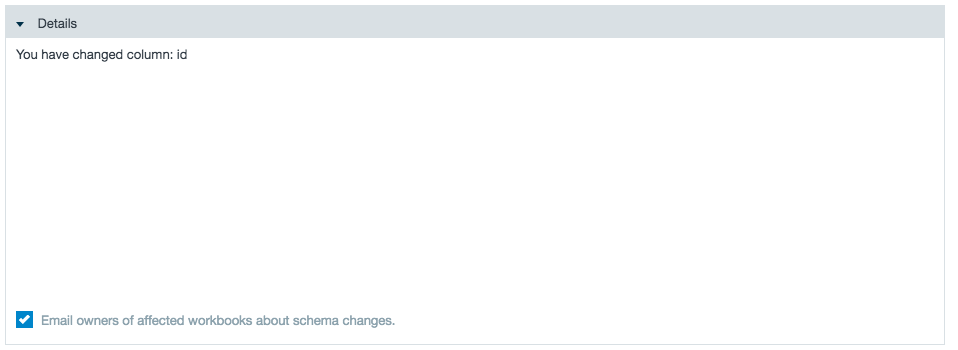

Datameer X gives you a notice when editing an import job if a schema change affects a corresponding workbook.

To view which workbooks are affected by a schema change from the import data:

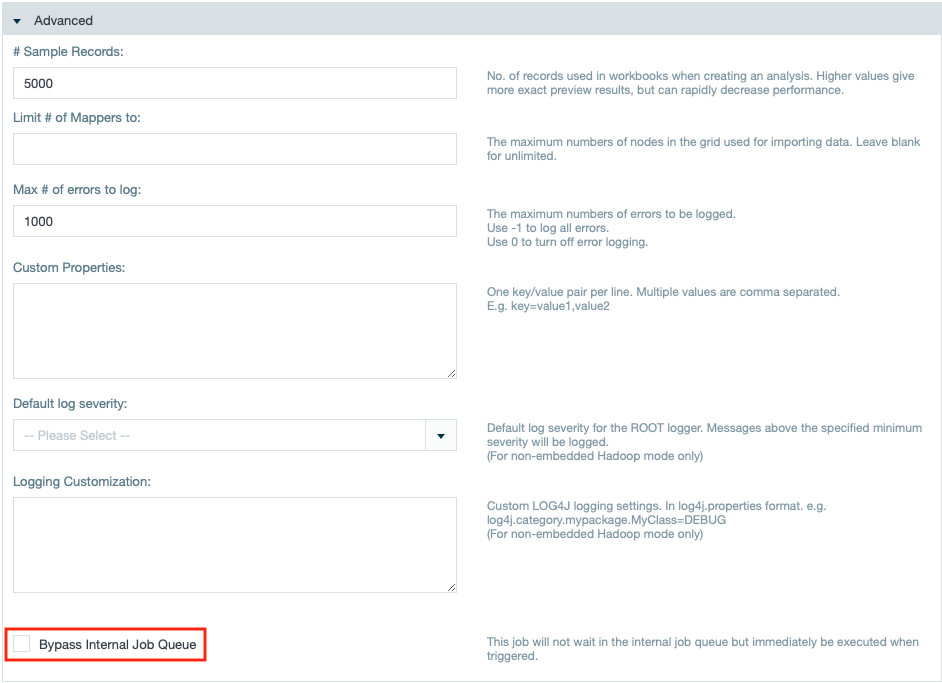

If needed, you can prioritize import jobs by bypassing the Job Scheduler's queue. This requires the corresponding role capabilities as well as available cluster resources.

To prioritize an import job when configuring it in the import job wizard, select the "Bypass Internal Job Queue" check box. The import job is executed right after you finish the import job wizard.